123b offers a novel approach to language modeling. The architecture uses a deep learning design to generate coherent output. Developers at Google DeepMind built 123b as a tool for a variety of NLP tasks.

- Applications of 123b include text summarization

- Adapting 123b requires large corpora

- 123b shows strong results on benchmarks

Exploring the Capabilities of 123b

The field of large language models is constantly evolving, with new contenders pushing the boundaries of what's possible. One model that has attracted significant attention is 123b. This system has a very large number of parameters, which lets it handle a wide range of tasks. From producing text in different creative formats to answering complex questions, 123b has demonstrated broad capabilities.

One of the most compelling aspects of 123b is its ability to understand and generate human-like text. This ability stems from training on a massive collection of text and code. As a result, 123b can hold natural conversations, write stories, and translate between languages accurately.

Moreover, 123b's adaptability extends beyond open-ended text generation. It can also be applied to tasks such as summarization, question answering, and code generation. This range of capabilities makes 123b a useful tool for researchers, developers, and anyone interested in exploring what artificial intelligence can do.

Fine-Tuning 123B for Specific Tasks

Large language models like 123B have tremendous potential, but their raw power can be harnessed further by fine-tuning them for targeted tasks. This process involves continuing to train the model on a curated dataset that matches the desired application. Doing so can improve 123B's performance in areas such as text summarization. Fine-tuning adjusts the model's weights so that they capture the nuances of a particular domain or task.

As a result, fine-tuned 123B models can deliver higher-quality outputs on their target tasks, making them valuable tools for a broad range of applications.
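
The idea of starting from existing weights and adjusting them on task data can be sketched in a few lines. This is only an illustration under loud assumptions: 123B's weights and training interface are not described here, so a two-weight linear model stands in for the language model, and every name (`fine_tune`, `pretrained`, `task_data`) is hypothetical.

```python
def predict(weights, features):
    """Toy linear model: dot product of weights and features."""
    return sum(w * x for w, x in zip(weights, features))


def mean_squared_error(weights, dataset):
    """Average squared error over (features, target) pairs."""
    return sum((predict(weights, x) - y) ** 2 for x, y in dataset) / len(dataset)


def fine_tune(pretrained_weights, dataset, learning_rate=0.05, epochs=200):
    """Start from pretrained weights and adjust them toward the task data."""
    weights = list(pretrained_weights)  # copy so the pretrained weights stay untouched
    for _ in range(epochs):
        for features, target in dataset:
            error = predict(weights, features) - target
            # Gradient of the squared error w.r.t. each weight is 2 * error * x.
            weights = [
                w - learning_rate * 2 * error * x
                for w, x in zip(weights, features)
            ]
    return weights


# Hypothetical "pretrained" weights and a small curated task dataset (assumptions).
pretrained = [1.0, 0.0]
task_data = [([1.0, 0.0], 2.0), ([0.0, 1.0], -1.0), ([1.0, 1.0], 1.0)]

tuned = fine_tune(pretrained, task_data)
loss_before = mean_squared_error(pretrained, task_data)
loss_after = mean_squared_error(tuned, task_data)
print(f"loss before: {loss_before:.4f}, loss after: {loss_after:.6f}")
```

The same pattern applies at scale, where the curated dataset shapes which nuances the adjusted weights pick up, though a real model would need an optimizer, batching, and far more data than this sketch uses.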

Benchmarking 123b Against Existing Models

Evaluating 123b against existing language models is a useful way to gauge its strengths and limitations. A thorough evaluation measures 123b's performance on a suite of standard tasks, covering areas such as text generation. By using established benchmarks, we can systematically compare 123b with other models in the field.

Such an analysis not only sheds light on 123b's capabilities but also improves our understanding of the broader field of natural language processing.
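
A comparison of this kind can be sketched as a small evaluation harness. The example below is a minimal illustration, not an established benchmark: the task suite, the exact-match metric, and both "models" (plain Python functions standing in for 123b and a baseline) are assumptions made for the sake of the example.

```python
def exact_match_accuracy(model, examples):
    """Fraction of prompts whose output matches the expected answer (case-insensitive)."""
    correct = sum(
        1
        for prompt, expected in examples
        if model(prompt).strip().lower() == expected.strip().lower()
    )
    return correct / len(examples)


def benchmark(models, suite):
    """Score every model on every task and return {model_name: {task_name: accuracy}}."""
    return {
        name: {task: exact_match_accuracy(model, examples) for task, examples in suite.items()}
        for name, model in models.items()
    }


# Hypothetical task suite: each task is a list of (prompt, expected answer) pairs.
suite = {
    "arithmetic": [("2 + 2 =", "4"), ("3 * 3 =", "9")],
    "capitals": [("Capital of France?", "Paris"), ("Capital of Japan?", "Tokyo")],
}


def candidate_model(prompt):
    """Stand-in for the model under test; a real harness would call the model here."""
    answers = {"2 + 2 =": "4", "3 * 3 =": "9", "Capital of France?": "paris"}
    return answers.get(prompt, "")


def baseline_model(prompt):
    """Stand-in baseline that always gives the same answer."""
    return "4"


results = benchmark({"candidate": candidate_model, "baseline": baseline_model}, suite)
for name, scores in results.items():
    print(name, scores)
```

Keeping the metric and the task suite fixed across models is what makes the scores comparable; real benchmarks typically use much larger task sets and metrics suited to each task.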

Design and Development of 123b

123b is an enormous language model, known for its advanced architecture. Its design stacks many layers of artificial neurons, enabling it to process immense amounts of text data. During training, 123b was exposed to an abundance of text and code, allowing it to learn complex patterns and generate human-like content. This intensive training accounts for 123b's strong performance across a variety of natural language processing tasks.

Ethical Considerations in Developing 123b

The development of sophisticated AI systems like 123b raises a number of pressing ethical issues. It's critical to consider carefully the possible consequences of such technology for society. One key concern is the possibility of bias being built into the system, leading to inaccurate outcomes. Furthermore, there are questions about the interpretability of these systems, since it is hard to understand how they arrive at their results.

It's essential that developers follow ethical guidelines throughout the whole development cycle. This means ensuring fairness, accountability, and human oversight in AI systems.

Amanda Bynes Then & Now!

Amanda Bynes Then & Now! Yasmine Bleeth Then & Now!

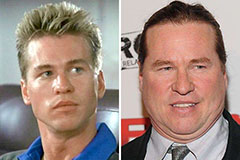

Yasmine Bleeth Then & Now! Val Kilmer Then & Now!

Val Kilmer Then & Now! Tonya Harding Then & Now!

Tonya Harding Then & Now! Jaclyn Smith Then & Now!

Jaclyn Smith Then & Now!